Digital Omnibus: the proposal that brings privacy lawyers to their knees, although their marriage is far from final

Caroline van Ekeren, Data & Privacy lawyer, ICTRecht

The European Commission’s (Commission) proposed Digital Omnibus (Omnibus) is presented as a necessary intervention in Europe’s increasingly dense digital rulebook. Its stated objectives are straightforward: to simplify compliance, reduce administrative burdens and stimulate innovation by streamlining elements of the European Union’s digital regulations.

For many organisations, and particularly for in-house counsel, the promise of simplification sounds appealing. Digital regulation has expanded rapidly in recent years, and the overlap between legislation such as the General Data Protection Regulation (GDPR), the Artificial Intelligence Act (AI Act), the Data Act (DA), cybersecurity obligations and sector-specific rules is by now a reality. A single process can be regulated by five different rulebooks, and that is quite a challenge.

Yet the Omnibus is not just a technical clean-up. It reflects a broader political shift in how digital regulation is framed: from rights infrastructure to a competitiveness lever. That shift deserves closer attention.

This article gives a general overview of the Omnibus, provides a critical assessment, analyses some of the major proposed changes to the GDPR and briefly highlights the changes to the AI Act.

Objectives: simplification as policy strategy

The Commission’s objectives are clear. The Omnibus seeks to ‘enhance governance and effective enforcement of the Digital Acquis by reducing the complexity of rules, administrative costs for businesses and administrations and repealing of Acts.’ (November 2025, European Commission). The second objective is the creation of a single-entry point for incident reporting across multiple legal frameworks.

The Commission also lists performance indicators to see if we are heading in the right direction: calculated cost reductions for businesses and measurable cost savings for incident reporting by businesses.

On paper, these are sensible goals. Few would argue that compliance should be unnecessarily duplicative or structurally incoherent. The problem is not simplification of the rulebook as a concept, but the assumptions that accompany it.

A personal critique: unfounded presumptions and the innovation fallacy

The Draghi report (September 2024, Draghi) was clear, according to the Omnibus: compliance is costing organisations a considerable amount of time and money. Therefore, we need to simplify the rulebook. Simplifying the rulebook will save us time and money, and we will direct those savings towards innovation. Or at least, that is what is presumed. Several (il)logical leaps of thought are made here.

Firstly, this reasoning implies that the time and costs spent on compliance can be resolved by altering the text of the law. The Commission seems to suggest that complexity in compliance stems from misunderstanding or interpretative uncertainty. In practice, however, compliance issues are often driven by organisational realities: project failures, governance gaps, risk aversion, and misapplication of existing rules.

Changing the wording of legal provisions does not automatically fix those deeper dynamics.

When the GDPR came into force, colleagues with other specialisms were handed the task of GDPR compliance without being sufficiently prepared. We should therefore ask ourselves: does changing the text of these laws resolve the issues with compliance? Or are we using this narrative to change the text?

And if the complexity of compliance does lie in interpretation, then encouraging guidance from the European Data Protection Board (EDPB, the body that brings together the European data protection authorities) or the Commission, and streamlining interpretation at European level, might be enough to solve that issue.

Secondly, in discussions around the proposal, compliance and innovation are often mentioned in the same breath, as though they are communicating vessels: reduce obligations here, and creativity will flourish there (see recital 4 of the proposal).

In my view, that is a fallacy. The listener is led to believe that time saved on one side immediately boosts innovation on the other. How do we know that time saved on compliance flows directly towards innovation? People in business will know that reality is not that black and white.

Thirdly, it is not evident that regulatory obligations are the primary barrier to European innovation. Reports such as Draghi’s competitiveness analysis point to a much wider set of structural factors: financing gaps, failure to translate innovation into commercialisation, fragmentation of markets, industrial strategy, and geopolitical dependencies. Cultural differences with, for example, China and the United States also play a role. Regulation is part of the environment, but not the whole story.

This is not necessarily to suggest that these issues are not being addressed, but the leap from ‘lifting compliance issues’ to ‘immediate innovation’ is not one we can simply make.

The Omnibus therefore skips several logical steps: from compliance burden to textual complexity, to legislative amendment, to innovation gains. That chain is far less self-evident than the proposal implies. This is not an argument against simplification, but a caution against overselling it for the wrong reasons.

Overview: what the Digital Omnibus addresses

The proposal covers multiple areas:

- Consolidation of incident reporting obligations across regimes such as the Network and Information Security 2 (NIS2) Directive, the GDPR and the Digital Operational Resilience Act (DORA)

- Merging elements of the data acquis (Data Governance Act, Open Data Directive and Free Flow of Non-Personal Data rules) into the Data Act, creating one data sharing framework (together with Data Spaces)

- Targeted amendments to the GDPR, including changes to core concepts such as personal data, data breach notification and transparency obligations

- Simplification measures for the AI Act, including adjusted timelines and reduced administrative duties in certain cases

GDPR: clarifications with structural impact

The GDPR amendments are among the most debated elements, precisely because they are presented as technical clarifications while potentially shifting enforcement realities. The proposal concerns, among other things, the definition of personal data, scientific research, the rules on data breach notifications and cookie rules. Some of the most discussed changes are set out and analysed below.

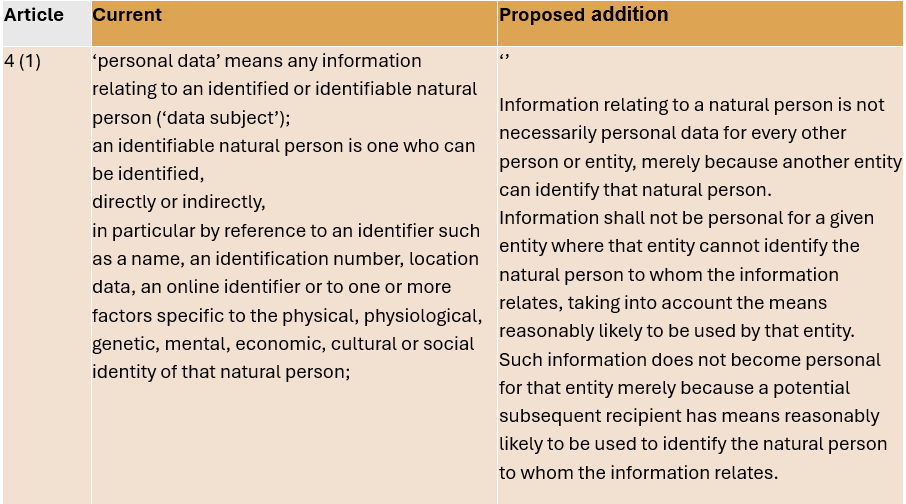

Personal data and identifiability

The proposal clarifies that information is not necessarily personal data for every entity simply because another party could identify the individual. Data should not be considered personal for an entity that cannot identify the person, taking into account the means reasonably likely to be used.

The intention of the Commission seems to be to enshrine the Court of Justice of the EU’s (CJEU) reasoning in SRB v EDPS, where pseudonymised data was not treated as universally personal in all contexts. Specifically, the Court states that “pseudonymised data must not be regarded as constituting, in all cases and for every person, personal data” and “It is settled case law that […] it is not required that all the information enabling the identification of the data subject must be in the hands of one person”.

When the two are placed side by side and read against existing case law, the new definition seems broader than the CJEU intended in the SRB case. Apart from this, laying it down in the text seems superfluous and out of place: it is common practice to use the CJEU’s interpretation to interpret the text of the law, not to change the text afterwards.

Critics such as None of Your Business (NOYB, a digital rights organisation) additionally point out a practical flaw: if the definition depends on what a controller knows or can do, outsiders may not be able to verify whether the GDPR applies at all. Data subjects and supervisory authorities may lack access to the relevant context. Clarification may therefore come at the price of enforceability.

Figure 1: current and proposed Article 4(1) GDPR.

Special categories of personal data: room for AI and verification

The Omnibus proposes two notable adjustments to Article 9 GDPR.

Firstly, it introduces an exemption (to the prohibition) for the use of biometric data where processing is necessary to confirm the identity (verification) of the data subject and remains under the sole control of that data subject. Interestingly, this is not entirely new in the Netherlands. Dutch law already contains a similar exemption for biometric authentication in Article 29 of the Dutch GDPR Implementation Act (UAVG).

Secondly, the proposal allows the processing of special category data for the development and operation of AI systems, subject to safeguards. The controller needs to avoid the processing of special categories of personal data. If, despite these efforts, the controller identifies special categories of personal data, the controller needs to remove them. Where removal requires disproportionate effort, the controller needs to do everything to prevent the data from being (re)produced as output.

This exception is framed broadly and seems to legitimise, after the fact, the training of AI models that has already taken place. It refers to processing ‘in the context of’ AI development and operation, which raises questions about scope and limits.

NOYB criticised this wording as too open-ended. If the exception is framed widely, and if safeguards are not strictly defined, we may see a gradual expansion of what counts as permissible processing of sensitive data.

One could argue that this space is needed to foster AI development in the EU. Nevertheless, I cannot help but wonder if special categories of personal data are necessary to boost innovation and competition – in all cases. In order to visualise this critique, and in a countermovement against generative AI taking over imaging, I drew a simple cartoon to demonstrate the essence of the commentary (see Figure 2).

Data breach notification

Another notable change is the raised threshold for notifying supervisory authorities of data breaches. Right now, authorities need to be notified unless the breach is unlikely to result in a risk (not a high one, just ‘a’ risk) to the rights and freedoms of individuals. In the proposal, the threshold is aligned with the one for notifying the affected individuals: notification would only be required where a breach is likely to result in a high risk (!) to individuals.

The deadline would be extended from 72 to 96 hours, and harmonised templates would be introduced.

From an efficiency perspective, a single-entry point and harmonised templates may reduce reporting volume. Regarding the altered threshold, the Dutch Data Protection Authority (DPA) warns that it may weaken the early-warning function of breach notification.

The Dutch DPA emphasised in its Position Paper that organisations often underestimate risks, meaning fewer notifications could translate into delayed mitigation and reduced oversight. Specifically, the DPA states that in 2023 an average of 69% of the organisations hit by a severe cyberattack underestimated the risk to affected individuals. In 54% of the cases (330), affected individuals were not informed, although they should have been. With the DPA receiving even fewer notifications, the raised threshold would reduce its ability to assist organisations in mitigating the risks involved after incidents (see Figure 3).

Access rights and ‘abuse’

In the current situation, controllers can refuse a request or charge a fee where requests are manifestly unfounded or excessive, for instance because they are repetitive. The controller bears the burden of proof to demonstrate that this is the case. The proposal adds that, for the right of access, the controller can also refuse or charge a fee where the data subject abuses this right ‘for purposes other than the protection of their data’.

This proposed change would limit the use of the right of access. NOYB even warns that access requests for journalistic, research, political, economic, legal or other purposes will be excluded, whilst existing CJEU case law seems to tell us that no specific aim is required (among others, CJEU, FT v DW). Additionally, the Dutch DPA warns of increased uncertainty and more work, although the objective is to reduce both. Organisations may need internal policies to determine when requests cross the line, creating new uncertainty rather than removing it.

Moving tracking rules for personal data into the GDPR

Finally, the Omnibus proposes moving cookie and terminal equipment rules regarding personal data into the GDPR through new Articles 88a and 88b. Automated, machine-readable preference signals would become mandatory once standards are adopted.

This could harmonise the chaotic cookie landscape and reduce ‘consent fatigue’, which is one of the Commission’s stated objectives. For that reason, the Dutch DPA responded positively to the machine-readable preference signals.

AI Act: simplification before implementation

The Digital Omnibus on AI proposes changes to the (not yet fully in force) AI Act.

Key measures include:

• Reducing registration duties for certain high-risk AI systems with accessory tasks

• Linking high-risk obligations to the availability of harmonised standards

• Extending small and medium enterprise exemptions to small mid-cap companies

• Centralising oversight of systems based on general-purpose AI (GPAI) under the AI Office

• Expanding sandboxes (by authorising the AI Office alongside national authorities) and real-world testing

• Allowing the processing of special category data for bias mitigation

The timing is sensitive. The AI Act is still in its early implementation phase, and institutions such as the EDPB and the European Data Protection Supervisor (EDPS) warn that simplification must not come at the expense of fundamental rights. Adjusting obligations before practical experience has accumulated risks creating uncertainty rather than clarity.

What comes next?

The Digital Omnibus is still a proposal, and an intensive trilogue process in the EU lies ahead. Given the volume of criticism from the professional community, the matter is far from settled.

The Omnibus may reduce some paperwork. But it also introduces new interpretative questions, particularly around identifiability, legitimate interest, and shifting accountability thresholds.

The Digital Omnibus is often described as simplification. But simplification is never purely technical. It always chooses a direction. That is not necessarily a bad thing. Privacy lawyers in particular seem, consciously or not, to keep overlooking the fact that the GDPR (for example) also aims to enable the free flow of personal data. The use of data is beneficial to our economy and our society, and it should be enabled where appropriate.

The key question is whether the EU can streamline its digital framework without eroding the safeguards that made it credible in the first place, or whether the Commission will create a new balance between technology and society. I would stress that, in the latter case, this new balance should be explicitly stated and clarified. Changes should not be presented under the pretence that rights and freedoms are not affected. They will be. The question is whether we think that is the right thing to do.

References

- Digital Omnibus Regulation Proposal, 19 November 2025, European Commission

- The future of European competitiveness, September 2024, Mario Draghi

- Digital Omnibus on AI Regulation Proposal, 19 November 2025, European Commission

- Court of Justice of the EU, SRB v EDPS, 4 September 2025

- NOYB report on Digital Omnibus (GDPR and ePrivacy), January 2026

- Position Paper AP Omnibus digital en omnibus AI, 13 January 2026, Autoriteit Persoonsgegevens

- Court of Justice of the EU, FT v DW, 26 October 2023

- Joint Opinion on Digital Omnibus on AI, 20 January 2026, EDPS and EDPB
